winkComposer · open-sourcing soon

Composable Streaming Intelligence.

Turn streaming data into actionable insights, from edge to cloud. A high-performance JavaScript framework for the Industrial IoT (IIoT): signal conditioning, anomaly detection, and health assessment, underpinned by neural-network intelligence.

The same pipeline runs on a Raspberry Pi, a gateway, or a server.

What it surfaces

Every stream has a story.

Composer reveals it.

Two recurring patterns follow: drift and classification. The hero section shows a third, bearing degradation on real NASA data. More use cases span telematics, HVAC, and IT infrastructure.

A control loop hides what variance reveals.

A catalyst slowly degrades in the Tennessee Eastman benchmark. Temperature stays flat because the control loop compensates, but pressure oscillations grow from ±1 to ±50 kPa. Three detectors catch the instability before the reactor trips.
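To show why variance exposes what a steady mean hides, here is a minimal sketch in plain JavaScript. It is not winkComposer's API, and the page does not say which three detectors Composer uses. The hypothetical `createVarianceDetector` keeps a rolling window, takes the first full window as the "normal" standard deviation, and raises an alarm when the current standard deviation exceeds that baseline by an assumed ratio. The window size, ratio, and synthetic signal are illustrative assumptions, not benchmark data.

```javascript
// Hypothetical rolling-variance detector (illustration only, not the winkComposer API).
// Compares the standard deviation of the last `windowSize` samples against a
// baseline taken from the first full window.
function createVarianceDetector(windowSize, ratioThreshold) {
  const samples = [];
  let baselineStd = null;

  // Population standard deviation of an array of numbers.
  function std(values) {
    const mean = values.reduce((a, b) => a + b, 0) / values.length;
    const variance = values.reduce((a, v) => a + (v - mean) ** 2, 0) / values.length;
    return Math.sqrt(variance);
  }

  return function update(x) {
    samples.push(x);
    if (samples.length > windowSize) samples.shift(); // keep a fixed-size window
    if (samples.length < windowSize) return { ready: false, alarm: false };

    const current = std(samples);
    if (baselineStd === null) baselineStd = current; // first full window = normal behaviour

    const ratio = baselineStd > 0 ? current / baselineStd : current > 0 ? Infinity : 1;
    return { ready: true, std: current, ratio, alarm: ratio > ratioThreshold };
  };
}

// Synthetic signal: the mean never moves, only the oscillation amplitude grows.
const detect = createVarianceDetector(20, 5);
const MEAN = 100; // arbitrary level; the average is identical before and after the change
let firstAlarm = -1;
for (let t = 0; t < 200; t++) {
  const amplitude = t < 100 ? 1 : 50; // small swings, then large swings
  const result = detect(MEAN + amplitude * Math.sin(t));
  if (result.alarm && firstAlarm < 0) firstAlarm = t;
}
console.log("first alarm at sample", firstAlarm);
```

A threshold on the mean would never fire here, because the signal is centred on the same value throughout; only a spread-based statistic sees the change.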

Average speed says nothing. Its behaviour tells you the road.

In real GPS data from a truck on an Indian highway, speed averages 40 km/h whether the truck is cruising or crawling through jams. A flow reads the same speed stream three ways and fuses the results into Highway, City, or Jam.
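The page does not say which three readings the flow takes, so the following is only a sketch under stated assumptions. It derives three hypothetical views from one window of speeds (how erratic the speed is, the share of time nearly stopped, and the share of time at cruising speed) and fuses them with made-up rules. The names `describeWindow` and `classifyRoad` and every threshold are illustrative, not winkComposer code.

```javascript
// Hypothetical three-view speed classifier (illustration only; thresholds are made up).
function describeWindow(speeds) {
  const n = speeds.length;
  const mean = speeds.reduce((a, v) => a + v, 0) / n;
  const std = Math.sqrt(speeds.reduce((a, v) => a + (v - mean) ** 2, 0) / n);
  return {
    cv: mean > 0 ? std / mean : 0,                         // view 1: how erratic the speed is
    stopFraction: speeds.filter((v) => v < 5).length / n,  // view 2: time spent nearly stopped (km/h)
    fastFraction: speeds.filter((v) => v > 60).length / n, // view 3: time spent cruising (km/h)
  };
}

// Fuse the three views into one label with simple, assumed rules.
function classifyRoad(speeds) {
  const { cv, stopFraction, fastFraction } = describeWindow(speeds);
  if (stopFraction > 0.3) return "Jam";
  if (fastFraction > 0.5 && cv < 0.3) return "Highway";
  return "City";
}

// Two windows with the same 40 km/h mean get different labels.
const steady = [38, 42, 40, 41, 39, 40];  // mean 40, smooth
const stopAndGo = [0, 0, 80, 80, 0, 80];  // mean 40, repeated stops
const cruising = [62, 65, 63, 66, 64, 61, 65, 63];

console.log(classifyRoad(steady), classifyRoad(stopAndGo), classifyRoad(cruising));
```

The mean is computed only to normalise variability; it never decides the label on its own, which is the point the use case makes.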

WiFi AP health, wash-cycle states, server latency, and more: the library of use cases grows with the community.

Explore all use cases →

How it fits

One moving part.

Composer is the processing layer in a stack of proven, boring, open-source parts. No re-platforming: it slots into the brokers, historians, and dashboards you already run.

The output layer

Your operations, at a glance.

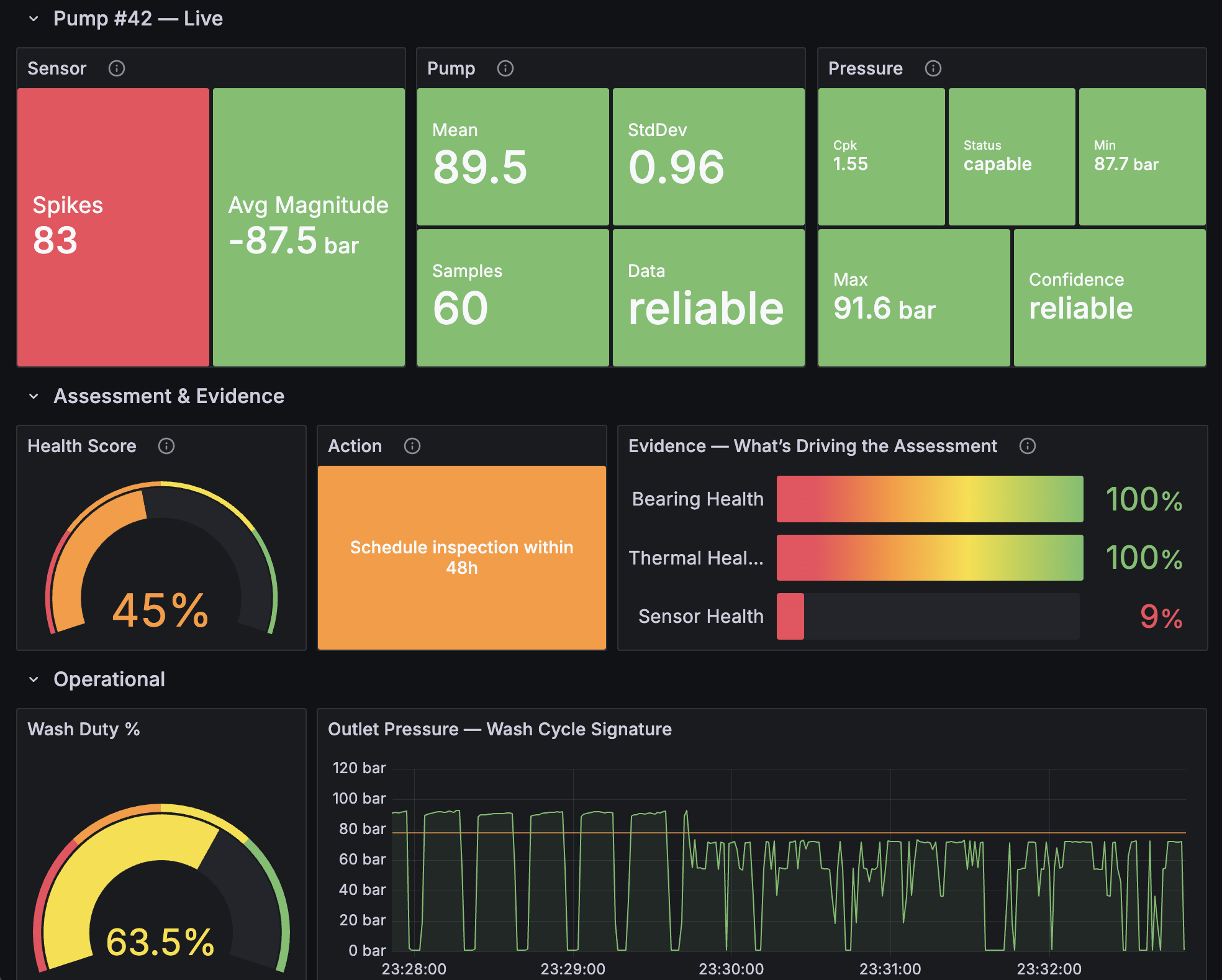

Composer computes. QuestDB stores. Mosquitto delivers alerts in real time. Grafana visualizes. Health scores, evidence, and cycle signatures: the same intelligence your pipeline produces, in dashboards your team already knows.

winkComposer · QuestDB · Mosquitto · Grafana — open source, end to end

Express what, not how

Building blocks.

Endless combinations.

Each block, or "node", does one thing well: connect, filter, smooth, detect, classify, act, and more. Compose them through one declarative flow language into any pipeline you need.
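The syntax of Composer's declarative flow language is not shown here, so this sketch uses plain JavaScript function composition to illustrate the idea of single-purpose nodes chained into a pipeline. `pipeline`, `filterRange`, `smoothEma`, and `detectAbove` are hypothetical names, not winkComposer nodes, and the limits are arbitrary.

```javascript
// Hypothetical node composition (illustration only; not the winkComposer flow language).
// Each node takes a message and returns a new message, or null to drop it.
function pipeline(...nodes) {
  return (msg) => nodes.reduce((m, node) => (m === null ? null : node(m)), msg);
}

// filter: drop readings outside a plausible range
const filterRange = (lo, hi) => (msg) => (msg.value >= lo && msg.value <= hi ? msg : null);

// smooth: exponential moving average, with its state held in a closure
const smoothEma = (alpha) => {
  let ema = null;
  return (msg) => {
    ema = ema === null ? msg.value : alpha * msg.value + (1 - alpha) * ema;
    return { ...msg, smoothed: ema };
  };
};

// detect: flag messages whose smoothed value exceeds a limit
const detectAbove = (limit) => (msg) => ({ ...msg, alert: msg.smoothed > limit });

const flow = pipeline(filterRange(0, 150), smoothEma(0.5), detectAbove(80));

const readings = [70, 72, 999, 90, 95, 100]; // 999 is an out-of-range glitch
const out = readings.map((value) => flow({ value })).filter((m) => m !== null);
console.log(out.map((m) => `${m.value} -> ${m.smoothed} alert=${m.alert}`).join("\n"));
```

Because each node only knows its own job, swapping the smoother or adding a second detector changes one line of the pipeline rather than the surrounding logic.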

Read the flow language

AI-native · via MCP

Your fleet, in plain English.

Every insight is pre-computed, typed, and stored. Any LLM retrieves the facts via the Model Context Protocol (MCP), then reasons over them. No prompt engineering. No sampling. No hallucinated metrics.

How MCP grounding works

Proven at scale

Same engine.

Any scale.

An 8-node pipeline, benchmarked end-to-end. Your flow runs the same whether you ship it to a Pi in a gatehouse or a server tracking a full fleet.

The same engine powers every use case on this site, running in your browser.

Transitioning to open source

Follow the journey.

winkComposer is in active development. Interfaces will break, details will go stale, and whole sections will be reshaped. If something looks wrong, please tell us.